Herein I share an experienced developer's first foray into a real-world Rust project. It focuses closely on the type system, and the key insights I needed to begin "thinking in Rust". Written for those getting to grips with the language, it will be especially useful if your background isn't functional programming.

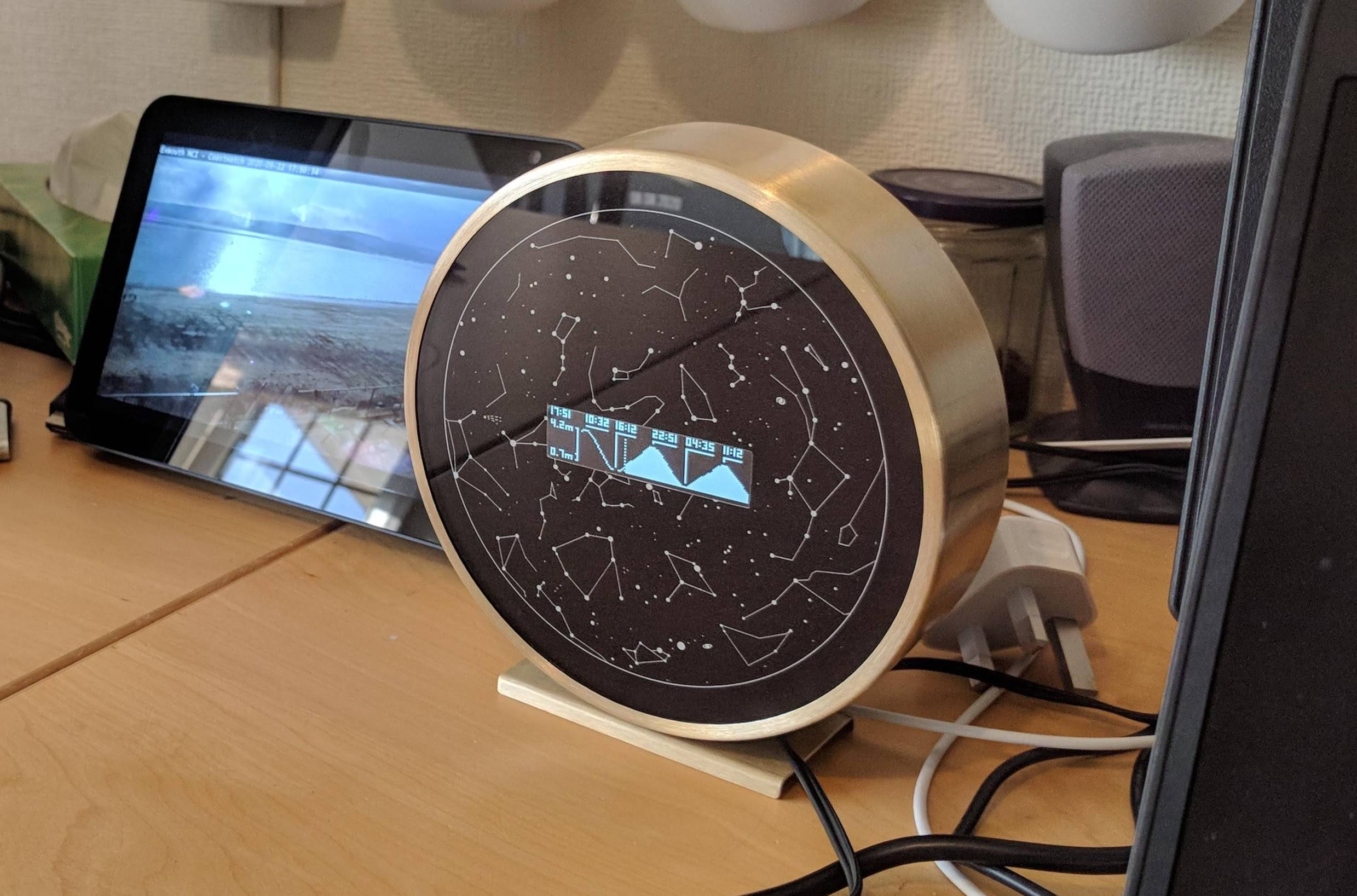

Although originally conceived as part 2 of 2, this can be read as a standalone entry. In part 1, written for a general audience, I detail the maker project, a Raspberry Pi Tide Clock, and the heartwarming story behind its origins.

My First Date with Rust - Know Thy Enemy

As a working programmer with several mainstream languages under your belt (e.g. Java, C#, JavaScript, Python), it's reasonable to expect that after a bit of orientation with quirks and syntax you'll find yourself somewhat productive. If you know Rust you won't be surprised when I say my experience was... humbling.

The last time I felt so dumb was probably more than a decade ago, as a novice programmer teaching myself Object-Oriented Programming (OOP). I think that's the point: new paradigms require effort to build a working mental model. And depending on where you start on the ladder, you might have quite a lot of mental rebuilding to do.

Rust intentionally tries to be "boring". That is to say, its DNA borrows liberally from prior art. If you're familiar with manual memory management (C, C++, Obj-C) you'll probably understand pointers in Rust. If you're familiar with functional languages (Haskell, Elm, Clojure, OCaml) you'll have a head start recognising the type system. It aims for boring because it spends its entire novelty budget on one unique idea: automatic, scope-based memory management that is verified at compile time (aka ownership and the borrow checker). This is the knockout feature: the performance of C with the memory safety of garbage-collected languages.

Plenty of ink has been spilled about ownership and the borrow checker. It follows that the most novel feature would have the most said about it. There seems to be a common refrain: "It's really hard at first, but stick with it. You'll see, it changes everything". Sounds suspiciously like Vim or Emacs users (please don't fight me). Is it the promised land or just a severe case of Stockholm syndrome?

Nevertheless, extensive reading had prepared me to expect a big ol' messy fight with the borrow checker. We did tussle, but it went better than expected. Ironically, after years of C# game development, performance concerns have taught me much of the internal dialogue needed to reason about memory lifetimes: "Is this allocated on the stack or heap? Is this going to create allocation (garbage collection) pressure? Is this copying data or mutating it in place?"

In this sense, when the borrow checker complained I could at least appreciate what it was trying to protect me against. And, as is to be expected, it may be a while yet before I fully grok lifetimes. Controversially though, "Rust's expressive type system" put up a stiffer fight than I anticipated.

Stubbing Toes on Functional Programming

Before anyone grabs a pitchfork, this isn't a rant about the unnecessary burden of type systems. Throughout my career, I've favoured statically and strongly typed programming languages. I believe in correctness, and tooling should help you get there. It's why Rust excites me.

Taken to its ultimate conclusion, one arrives at Haskell and other pure functional programming (FP) languages. FP's conceit is trading performance for correctness. As a concerned game developer, this never felt like a bargain I could entertain. Not that it's prevented FP idioms from seeping into the groundwater of other programming languages (see LINQ in C# or map(), filter() and reduce() in JavaScript as examples).

You can see signs of this functional DNA written all over Rust too, immutability by default being the most obvious. However, Rust strikes a wonderfully pragmatic balance. Imperative programming is not outlawed, and many escape hatches exist. Rust is, as far as I'm aware, the first language that allows you to deploy functional concepts as zero-cost abstractions. If the borrow checker is the obvious hit single, this is the real album sleeper hit.

I think my undoing was simply that I had a false expectation of equivalence. I'm confident in my type literacy and I can, if we must, talk in-depth about Java design patterns. I've never courted a "proper" functional language, but I recognise their influence. I understood "expressive type system" to be a superset of what I already know. In some respects it is, but in others, it was fundamentally different. I want to share some of my "aha" moments because I haven't seen much written with this lens...

Use Rust Analyzer!

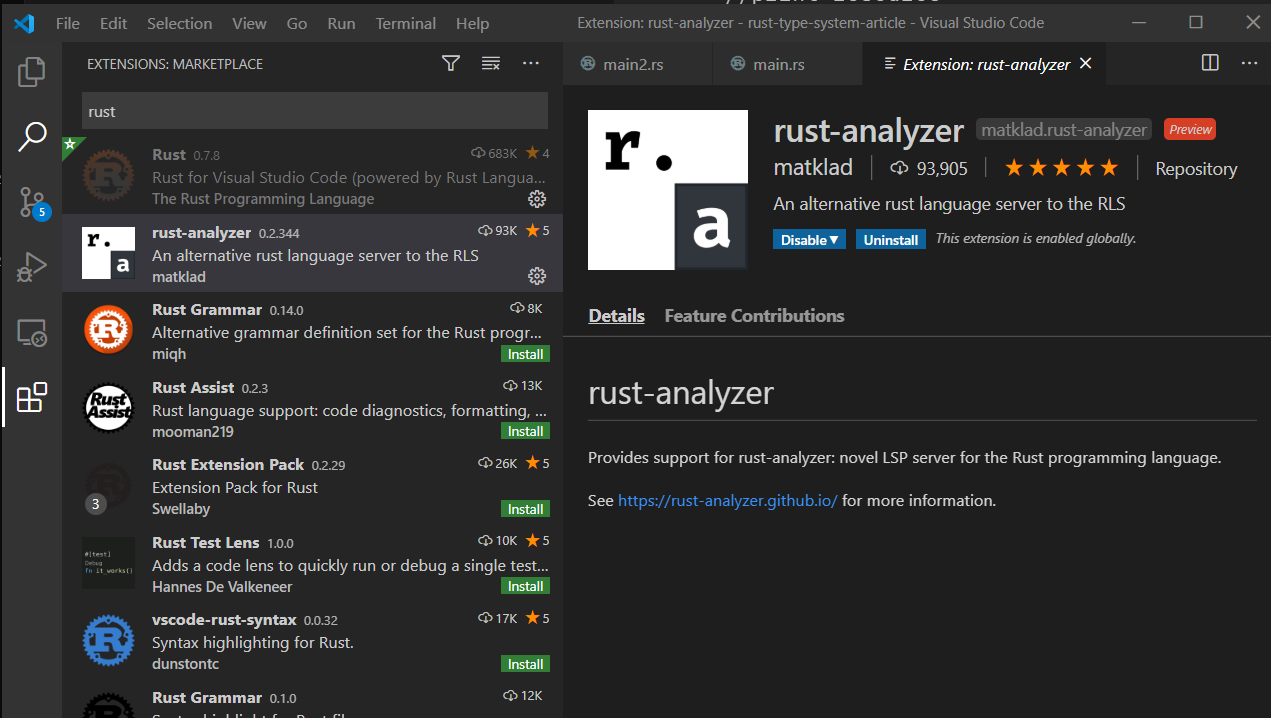

To anyone following in my footsteps, my best advice is to set up your dev environment for code intelligence and type hints. I intentionally offer it here before any code samples make you glaze over and miss it.

At the time of writing (Oct 2020), I found Visual Studio Code with the rust-analyzer extension to be the most reliable path. I learnt this only after naively installing the Rust extension, which provides a front end for both the earlier RLS and the more modern rust-analyzer. However, in my experience, the native rust-analyzer extension was vastly superior.

Taste Testing Rust's Flavour

If you can express something as a type, the compiler can offer you guarantees about it. An expressive type system allows you to model domains that weren't possible before, often using simpler machinery too. Rust takes this affordance and runs with it. Types are used for everything, and I do mean everything. A lot of problems didn't quite make sense until I learnt to recognise them for what they were: type errors.

However, starting out, there are a few obvious differences I'd put into the "quirks" category. They seem foreign and strange, but acclimatisation comes quickly. I'll only briefly touch on them for those who've never seen Rust before. Exhaustive coverage exists in the Rust book and elsewhere.

Rust doesn't have classes and only uses structs:

    pub struct MyData {
        pub x: f32, // f32 = 32-bit floating point number type
        pub y: f32,
        pub z: f32,
    }

"Cool cool. Structs are kinda like classes anyway"

Methods are defined in impl blocks instead of the struct definition:

    pub struct MyData {
        pub x: f32,
        pub y: f32,
        pub z: f32,
    }

    impl MyData {
        pub fn init(x: f32, y: f32, z: f32) -> MyData {
            // Create and return a new struct
            MyData { x, y, z }
        }
    }

"Okay, separate impl blocks seem like a weird choice. Kinda reminds me of C header files..."

Functions defined on a type but not tied to an instance (associated functions) are called with a C++-like syntax, e.g. Type::function_name(). However, once an instance exists, its fields and methods are accessed with regular dot syntax, e.g. instance.method_name():

    fn main() {
        let position = MyData::init(0.0, 1.0, 0.0); // Type resolution
        let y = position.y; // Instance resolution
    }

There's no inheritance. Shared behaviours are defined in Traits.

    pub struct MyData {
        pub x: f32,
        pub y: f32,
        pub z: f32,
    }

    // Define a shared API
    trait Drawable {
        fn draw(&self);
    }

    // Implement concrete behaviour on the MyData struct
    impl Drawable for MyData {
        // self works like 'this'. Sort of...
        // The difference is we have to declare it manually
        fn draw(&self) {
            // Draw item using self.x, self.y, self.z
        }
    }

"Okay, so like Trait is just a different way to spell Interface? Gotcha. But no inheritance? That's pretty wild. But composition over inheritance, am I right?"

Now that we've implemented the Drawable Trait, we can call its methods on our struct:

    let position = MyData::init(0.0, 1.0, 0.0);
    position.draw(); // draw receives a reference to position as self

That's enough for now, but know there are plenty of other commendable type features, some of which we'll discuss later.

Where the Functional Rubber Meets the Road

Giant monolithic classes are considered a code smell. A signal that responsibilities need to be separated into separate concerns. Still, OOP maintains the feeling of discrete units. Data and behaviour are contained within the cell walls of the class, or at least within the inheritance chain.

Rust, by comparison, feels inside out, "itty bitty" and piecemeal. What might have been a single class is instead an accumulation of structs and traits, each defining its own narrow window into data and behaviour. In this talk, the creator of Clojure, another functional language, describes this quality as "ad-hoc polymorphism". Using only what you need, when you need it. This granular approach maximises the immutable surface area: mutability is limited to where it's necessary.
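To make the piecemeal feel concrete, here is a minimal sketch of two unrelated structs that each opt in to the same narrow trait. The names Shape, Circle, Rect and total_area are my own inventions for illustration and have nothing to do with the tide clock.

```rust
// A narrow window into behaviour: anything with an area
trait Shape {
    fn area(&self) -> f64;
}

struct Circle {
    radius: f64,
}

struct Rect {
    width: f64,
    height: f64,
}

// Each struct opts in separately; there is no shared base class
impl Shape for Circle {
    fn area(&self) -> f64 {
        std::f64::consts::PI * self.radius * self.radius
    }
}

impl Shape for Rect {
    fn area(&self) -> f64 {
        self.width * self.height
    }
}

// Accepts anything that implements Shape, whatever the concrete struct
fn total_area(shapes: &[&dyn Shape]) -> f64 {
    shapes.iter().map(|shape| shape.area()).sum()
}

fn main() {
    let circle = Circle { radius: 1.0 };
    let rect = Rect { width: 2.0, height: 3.0 };
    let shapes: [&dyn Shape; 2] = [&circle, &rect];
    println!("total area: {}", total_area(&shapes));
}
```

Neither struct knows about the other, and total_area only sees the one capability it asked for.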

In the flame wars of "was OOP a giant mistake?", it's debatable whether Rust allows you to write OOP code. I understand this now not as a wholesale rejection: abstraction and encapsulation remain necessary tools. However, Rust forces you to leave behind the features its critics consider harmful, inheritance being the obvious example. Call it "OOP, the good bits" if you will.

Ideas like composition over inheritance or separating data from behaviour have been around for a long time within the OOP world. However, FP encourages new principles: determinism and the avoidance of side effects. Let's look at an example.

Fixing the Billion Dollar Mistake

Tony Hoare, the inventor of the null reference, called it his billion-dollar mistake. Any programmer knows the misery of finding an error log littered with Null Reference Exceptions. But null values are fundamental, like the oxygen we breathe. Whenever I tried to contemplate an alternative I suffered a failure of imagination. "No null values? Far out man."

For a while, in prior languages, I attempted to answer this question by initialising variables with a default value instead of null. That worked when the default value meant something; otherwise, it just piped junk data into the system. Still, in successful cases, I began to appreciate the guarantee it offered. Any downstream code could trust a variable would be valid and not have to muddy itself with unexpected exceptions.

Software is such that, unfortunately, there will always be cases where initialisation or access of a resource fails. FP provides us with a more complete answer here. It correctly recognises valid|invalid or success|failure as a separate concern from the referencing of data. It does so by shifting responsibility to a separate type.

Let's take a look at a traditional imperative scenario (written in pseudocode):

    var item : Item = GetItem();

    if (item != null) {
        // Do something with resource
        print(item);
    }

    function GetItem : Item () {
        if (/*success*/) {
            var newItem = new Item();
            return newItem;
        }
        return null;
    }

Let's rewrite that in Rust. For the sake of comparison I'm using a non-idiomatic style:

    let item_option = get_item();

    match item_option {
        Some(item) => {
            // Do something with resource
            println!("{}", item);
        }
        None => {}
    };

    fn get_item() -> Option<Item> {
        if /* success */ {
            let new_item = Item {};
            return Some(new_item);
        }
        return None;
    }

What's going on here? If we look at the get_item() function, we see it returns None when it fails. Is that just a different way to spell null? Well, not exactly. The key is the return type Option<Item>. The type Item defines the bit we care about, but what's the Option<...> malarkey around it? Option is a generic type that comes with Rust. To understand it, let's take a closer look:

    pub enum Option<T> {
        Some(T),
        None,
    }

We can see this is very simple: it's just an enum with two variants, Some(T) and None. The generic placeholder, T, allows us to compose in our payload, as we did with Option<Item>. Similarly, we can see get_item() performs a concrete substitution when it returns Some(new_item), new_item being an instance of Item.

Essentially we have a wrapper around our value that says if it's valid or not. item no longer has any knowledge of its validity. Its existence is proof enough to eliminate the Null state. However, to get access to it we first need to tear off the wrapping paper. This is what the match construct is all about:

    match item_option {
        Some(item) => {
            // Do something with resource
            println!("{}", item);
        }
        None => {}
    };

It's called pattern matching, and it works like a switch statement over the variants of a type. Here the Some branch is the only code path with a valid reference to item. The compiler won't let you access item outside of this scope. And that's how an expressive type system fixes the billion-dollar mistake.
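The snippets above leave the success check as a placeholder comment, so they won't compile as written. Here is a self-contained sketch of the same idea. The name field on Item, the succeed flag and the describe function are assumptions of mine, added only so there is something to run.

```rust
struct Item {
    name: String, // hypothetical payload, just so there is something to print
}

// `succeed` stands in for whatever real check decides success or failure
fn get_item(succeed: bool) -> Option<Item> {
    if succeed {
        Some(Item { name: String::from("tide table") })
    } else {
        None
    }
}

fn describe(item_option: Option<Item>) -> String {
    match item_option {
        // `item` only exists inside this arm
        Some(item) => format!("got {}", item.name),
        // Leaving this arm out is a compile error: match must be exhaustive
        None => String::from("nothing"),
    }
}

fn main() {
    println!("{}", describe(get_item(true)));
    println!("{}", describe(get_item(false)));
}
```

Deleting the None arm is a quick way to see the compiler enforce the contract.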

Edit 1: Based on reader feedback it would be good to give an example of what idiomatic code might look like.

Edit 2: As highlighted by Reddit commentary, this turned out to be a clumsy example and could be improved. Evidence of the fact I'm still learning myself, I've chosen to leave it up for posterity.

"Declarative" code is less about style than it is about the promise that "this thing's behaviour is consistent" and knowable. To begin, we can match directly on the get_item() function call:

    match get_item() {
        Some(item) => { /* use item */ }
        None => {} // both arms must evaluate to the same type
    }

We can also use this pattern within other functions too. Doing so is worthwhile if we want to unlock composable functions:

    fn process_item(input: Option<Item>) -> Option<Item> {
        match input {
            Some(item) => {
                /* modify item */
                Some(item)
            }
            None => None,
        }
    }

Rust also provides a shorthand: if let is syntax sugar, a concise way to express interest in a single branch of a match statement. Putting it all together, we can state the whole problem as a single expression:

    if let Some(item) = process_item(get_item()) {
        println!("Success, item is {}", item);
    }

    fn get_item() -> Option<Item> {
        if /* success */ {
            let new_item = Item {};
            return Some(new_item);
        }
        return None;
    }

    fn process_item(input: Option<Item>) -> Option<Item> {
        match input {
            Some(item) => {
                /* modify item */
                Some(item)
            }
            None => None,
        }
    }

Like all things, both styles have benefits and drawbacks. An exact discussion of which is better, when and why, is for another day. However, by having a single expression of functions, if we know that those functions are "pure", we have some powerful promises about the correctness of our program. This, as I understand it, is FP's whole jam. I'll add, by virtue of mut and mutation, Rust is not pure by default like Haskell. So in the same way you could ask is Rust OOP, you could rightfully ask is Rust FP?
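For what it's worth, the standard library's Option also comes with combinator methods, so a match that only forwards None can often be replaced with map(). A minimal sketch, assuming a hypothetical Item with a count field and a succeed flag in place of the success check:

```rust
#[derive(Debug, PartialEq)]
struct Item {
    count: u32, // hypothetical field so "modify item" has something to change
}

// `succeed` stands in for the real success check
fn get_item(succeed: bool) -> Option<Item> {
    if succeed {
        Some(Item { count: 1 })
    } else {
        None
    }
}

// Option::map runs the closure on the value inside Some and passes None through untouched
fn process_item(input: Option<Item>) -> Option<Item> {
    input.map(|mut item| {
        item.count += 1; // stand-in for "modify item"
        item
    })
}

fn main() {
    if let Some(item) = process_item(get_item(true)) {
        println!("Success, item is {:?}", item);
    }
}
```

The behaviour matches the match version of process_item. It's only a terser way of saying "do this if there's something there".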

Spotting the pattern

Hopefully, it's clear why Option<Item> and Item are different types. The compiler will give you type errors if you try to use them interchangeably. It's self-evident when you're consuming a method you wrote (e.g. get_item()) and you know it returns an Option<T>. However, when using the std library or 3rd party crates, some innocuous scenarios caught me out. Consider the following:

    let my_list = vec![1, 2, 3];
    let first = my_list.first();
    assert_eq!(&my_list[0], first); // Oh no, type error!

Define a list [1,2,3] and get a reference to the first element using the first() method. A perfectly reasonable thing to write in any other language. If I'd had rust-analyzer enabled, it would have warned me of the trouble by adding a type hint after the first variable:

    let first: Option<&i32> = my_list.first();

Because it's impossible to return the first element of an empty list, first() instead returns an Option<&i32>, an &i32 with an "is this safe?" wrapper around it. Only if the list is populated will its value be Some(&i32); otherwise it resolves to None. Therefore attempts to consume it directly as an &i32 (a reference, &, to a signed 32-bit integer, i32) will fail. That's exactly what happens in our assertion above:

    // Is the first element, my_list[0], equal to my_list.first()?
    assert_eq!(&my_list[0], first);

    error[E0277]: can't compare `&{integer}` with `std::option::Option<&{integer}>`
      |
    5 | assert_eq!(&my_list[0], first);
      | ^^^^^^^^^^^^^^^^^^^^^^ no implementation for `&{integer} == std::option::Option<&{integer}>`

Rust is full of this type sleight of hand; it crops up everywhere. Being forced to deal with it feels like eating your broccoli. But eating your greens is the foundation of the warm fuzzy feeling functional programmers often cite: "If the code compiles, you can trust the code will run". Rust creates a similar sentiment, although if you cheat using backdoors, you will weaken this promise.

In the above example, like before, you can use a match statement to tear off the Option wrapping paper. Or you can cheat your way out of it by using unwrap():

    assert_eq!(&my_list[0], first.unwrap()); // Begone type error!

Here unwrap() is a shorthand for shedding the Option wrapper. However, it does so by choosing to ignore the None path. This means we court the danger of an empty list causing a runtime panic. It's not surprising that most, if not all, of my runtime errors came from choosing sneaky convenience over rigid safety.
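There are a few ways to keep the None path in play without reaching for unwrap(). The sketch below uses only standard library methods. The first_or_default helper and its fallback value of 0 are assumptions of mine, not something the tide clock needed.

```rust
// copied() turns Option<&i32> into Option<i32>; unwrap_or supplies a fallback for None
fn first_or_default(list: &[i32]) -> i32 {
    list.first().copied().unwrap_or(0)
}

fn main() {
    let my_list = vec![1, 2, 3];
    let first = my_list.first();

    // Compare like with like: wrap the left side rather than unwrapping the right
    assert_eq!(Some(&my_list[0]), first);

    // Or only run the comparison when there actually is a first element
    if let Some(value) = first {
        assert_eq!(&my_list[0], value);
    }

    // An empty list no longer panics, it just takes the fallback
    let empty: Vec<i32> = Vec::new();
    println!("{}", first_or_default(&empty));
}
```

Whether a fallback value is appropriate depends on the domain. As with my earlier default-value experiments, it only helps when the default actually means something.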

Bonus round

The following structure is closely related to Option<T> and is used for any operations that might need to return an explicit error message, like disk IO.

    pub enum Result<T, E> {
        Ok(T),
        Err(E),
    }

Ok(T) is the counterpart of Some(T), and Err(E) is like a None that carries an error of type E. Learning the toolbox used to manipulate these patterns is a fundamental part of learning Rust. However, there's a key feature I want to point out.

Ok(T) and Err(E), just like Some(T) and None, are different variants that carry different data. With Java or C# enums this wouldn't be possible. Firstly, generics<T> aren't allowed on enums. Secondly, in C# every enum value is backed by the same underlying integral type, commonly an int, and in Java every constant shares the same set of fields. In Rust, it's perfectly reasonable to create an enum whose variants each carry different types:
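One tool from that toolbox is the ? operator, which returns early with the Err and otherwise hands you the Ok value. A minimal sketch using string parsing from the standard library rather than disk IO, so it runs anywhere. The add_strs function is a made-up example.

```rust
use std::num::ParseIntError;

// Parse two numbers and add them; `?` bails out with the Err if either parse fails
fn add_strs(a: &str, b: &str) -> Result<i32, ParseIntError> {
    let x: i32 = a.trim().parse()?;
    let y: i32 = b.trim().parse()?;
    Ok(x + y)
}

fn main() {
    for (a, b) in [("40", "2"), ("40", "two")] {
        match add_strs(a, b) {
            Ok(sum) => println!("sum = {}", sum),
            // The error value carries the explanation that None could not
            Err(e) => println!("could not parse: {}", e),
        }
    }
}
```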

    enum Value {
        Number(f64),
        Str(String),
        Tuple(bool, String),
    }

This is a powerful way to model the result of a complex function with very divergent branching logic. Being able to match all possibilities in a single statement can make it simpler to understand the consequences of this code. It's another notch in Rust's "expressive" type system toolbelt.
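As a sketch of what matching all possibilities in a single statement looks like, here is the Value enum again with a hypothetical describe function. Each arm destructures the data its variant carries.

```rust
enum Value {
    Number(f64),
    Str(String),
    Tuple(bool, String),
}

// One match covers every variant; adding a variant later makes this fail to
// compile until the new case is handled
fn describe(value: &Value) -> String {
    match value {
        Value::Number(n) => format!("number {}", n),
        Value::Str(s) => format!("string {}", s),
        Value::Tuple(flag, label) => format!("tuple {} {}", flag, label),
    }
}

fn main() {
    let values = vec![
        Value::Number(1.5),
        Value::Str(String::from("high tide")),
        Value::Tuple(true, String::from("rising")),
    ];
    for value in &values {
        println!("{}", describe(value));
    }
}
```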

Idiomatic Rust

There's a subtle difference in the feeling I get from Rust types that's hard to explain. In OOP languages, objects tend to be an accumulation of types. Each superclass and interface aggregates and embellishes on top of the type you're working with. An instance of an object gives you the keys to the kingdom for every public or inherited member. There is also a robust set of built-in tools to transform from one type to another. It's inclusive by default.

This expectation was an initial source of frustration in Rust. I spent a lot of time trying to transform a result by casting it into the destination I needed. However, due to the itty-bitty style I mentioned before, idiomatic Rust is often exclusive by default.

Let's explain this further with a real-world example. The rppal crate provides an interface for a Raspberry Pi's 40 GPIO pins, which are used to communicate with hardware peripherals. To make an LED blink would be a matter of setting the voltage at the connected pin high or low. Similarly, pins can also be used to receive data from the outside world by measuring input voltage from sensors. It's important to know that read and write have mutually exclusive functionality, and this needs to be modelled in software.

The rppal crate is interesting because its history details a refactor to a more idiomatic pin API. I'm going to present a bastardised toy version here because I want to focus on the evolution rather than specifics. First, the old way:

    const LED: u8 = 5;
    const SENSOR: u8 = 6;

    // Config
    let led_pin: Pin = gpio.getPin(LED);
    led_pin.set_mode(mode::Output);

    let sensor_pin: Pin = gpio.getPin(SENSOR);
    sensor_pin.set_mode(mode::Input);

    // Write output
    led_pin.write(Level::High);
    led_pin.write(Level::Low);

    // Read input
    let level = sensor_pin.read();

Seems reasonable? gpio.getPin() collects a pin based on its numbered id. set_mode() configures it for output or input. read() and write() then carry out the business we came for. Notice though that once a pin's mode is set, the developer is responsible for upholding the contract. Nothing will prevent the following:

    sensor_pin.write(Level::High); // Apply voltage to read-only pin?

Depending on the circumstances, consequences range from harmless to permanent hardware damage. This kind of compromise happens often in the "kitchen sink" approach of OOP, where a child class might have access to inappropriate data or behaviour. Perhaps that data is relevant to a sibling class, but this is a sign of the abstraction leaking. Let's look at how the API is presented after the refactor:

    const LED: u8 = 5;
    const SENSOR: u8 = 6;

    // NOTICE THE DIFFERENCE?
    let led_pin: OutputPin = gpio.getPin(LED).into_output();
    let sensor_pin: InputPin = gpio.getPin(SENSOR).into_input();

    // Write output
    led_pin.write(gpio::Level::High);
    led_pin.write(gpio::Level::Low);

    // Read input
    let level = sensor_pin.read();

Notice the into_output() and into_input() methods now return a specialised interface, either the OutputPin or InputPin type. Let's expand the into_input() method:

    struct Pin {
        pin: u8,
    }

    impl Pin {
        pub fn into_input(self) -> InputPin {
            InputPin { pin: self }
        }
    }

    struct InputPin {
        pin: Pin,
    }

    impl InputPin {
        pub fn read(&self) -> Level {
            self.pin.read()
        }
    }

We can see that InputPin is just a wrapper around Pin. The beauty of this is that it's no longer possible to call sensor_pin.write(), because that method doesn't exist on InputPin. Specialisation has given us a stronger safety guarantee.

However, it's crucial to note InputPin no longer has a type relation to Pin - they are distinct structs. If InputPin had inherited from Pin, I would have a robust set of language tools to cast or coerce between them. Losing this convenience isn't inherently bad, but it's worth watching out for.
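To see the whole pattern run end to end, here is a self-contained toy version. It is not rppal's real API: the Pin here just remembers a level in memory, and Pin::new, the level field and the level() accessor are assumptions of mine so the example has something to observe.

```rust
#[derive(Debug, PartialEq, Clone, Copy)]
enum Level {
    Low,
    High,
}

// Toy stand-in for a hardware pin: it only remembers the last level
struct Pin {
    number: u8,
    level: Level,
}

impl Pin {
    fn new(number: u8) -> Pin {
        Pin { number, level: Level::Low }
    }

    // Taking `self` by value consumes the Pin, so the general-purpose handle
    // can't be used once it has been specialised
    fn into_input(self) -> InputPin {
        InputPin { pin: self }
    }

    fn into_output(self) -> OutputPin {
        OutputPin { pin: self }
    }
}

struct InputPin {
    pin: Pin,
}

impl InputPin {
    fn read(&self) -> Level {
        self.pin.level
    }
}

struct OutputPin {
    pin: Pin,
}

impl OutputPin {
    fn write(&mut self, level: Level) {
        self.pin.level = level;
    }

    // Hypothetical accessor so the effect of write() is observable
    fn level(&self) -> Level {
        self.pin.level
    }
}

fn main() {
    let mut led_pin = Pin::new(5).into_output();
    led_pin.write(Level::High);
    println!("pin {} is {:?}", led_pin.pin.number, led_pin.level());

    let sensor_pin = Pin::new(6).into_input();
    println!("pin {} reads {:?}", sensor_pin.pin.number, sensor_pin.read());

    // sensor_pin.write(Level::High); // won't compile: InputPin has no write method
}
```

Uncommenting the last line turns the hardware hazard from earlier into a compile error.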

Structs are cheap to create, and Rust goes to town! These into_thing() gymnastics are everywhere. Similar patterns are also used to avoid expensive operations until necessary. You can chain map() or filter() over a list lazily, and only stamp the results into a newly allocated list when you call collect(). It applies to mutable transformations too, into_mut() being common. However, many of these exist by convention rather than by language design. Their prevalence depends in part on the author's stylistic choice. This meant I was more reliant on examples and documentation than I am perhaps accustomed to.
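A minimal sketch of that lazy map(), filter() and collect() chain, using only the standard library. The even_squares function is a made-up example.

```rust
fn even_squares(input: &[i32]) -> Vec<i32> {
    input
        .iter()
        .filter(|n| **n % 2 == 0) // nothing runs yet: this only describes the work
        .map(|n| n * n)
        .collect() // the chain is driven here, filling one newly allocated Vec
}

fn main() {
    let my_list = vec![1, 2, 3, 4];
    println!("{:?}", even_squares(&my_list));
}
```

No intermediate list is built between filter() and map(). Each element flows through the whole chain when collect() asks for it.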

Conclusion

These were simple examples, but, hopefully, enough to peel back some of the ways that Rust feels different. That's an important stepping stone towards being able to think in Rust. To write idiomatic code that uses the best of the language rather than just trying to do things the way you've done them before.

This conversation goes far beyond types though. Some old-world patterns that work well in their natural habitat, sans the burden of ownership, would be a crucifixion under the ire of the borrow checker. Events come to mind: a rudimentary building block of many programming paradigms, but solving the ownership semantics in Rust would be ruthlessly tedious.

So, is Rust the promised land? I think it's too soon for me to say. I was expecting a pedantic language, but sometimes the pedantry felt stifling. Even working with different number types, something I took for granted, turned into a shouting match. Then again, as my first project in a new language, the vague sense of confidence it wouldn't fall over was unusual and different.

Regardless, Rust feels like a vision of the future. The variety of concepts that have been brought to bear in a single location is incredible. I'm convinced that my brief time with Rust has already made me a better programmer, and for that reason alone I'd say it's worth putting on your radar.

Discussion on r/rust